DocAssist is built to production standards — evaluated before optimised, cost-profiled, and deployed with structured observability. A summary for technical reviewers.

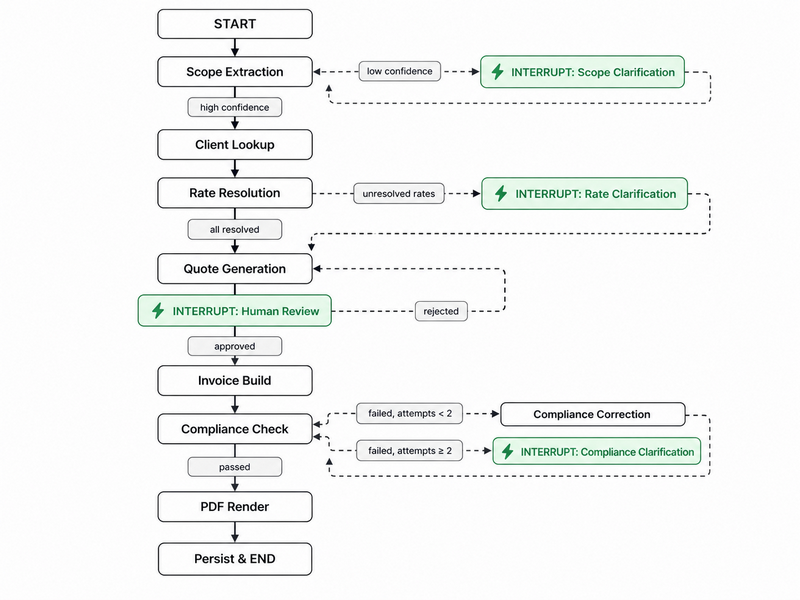

LangGraph Stateful Pipeline

- 10-node directed graph with 4 interrupt gates — scope, rate, and compliance clarification plus human review

- Full state checkpointed to SQLite; graph suspends and resumes across HTTP requests, hours apart if needed

- Human-in-the-loop is structural — the graph cannot produce an invoice without an explicit owner approval

Eval-First Development

- Eval harness committed before any LLM code (test-first approach)

- 3 layers: deterministic tests (§11 field presence, VAT arithmetic, UID regex), 24 annotated gold pairs (8 per industry), and adversarial cases (vague input must trigger a clarification request, not a hallucinated answer)

- 26/28 tests green: 100% of active tests pass; the remaining 2 are stubs marked xfail
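The deterministic layer needs no model at all. The sketch below shows what the VAT-arithmetic and UID-regex tests could look like. `vat_breakdown`, `is_valid_at_uid`, and the half-up rounding to cents are assumptions rather than the project's actual code. The regex checks only the Austrian UID format ("ATU" plus 8 digits), not the check digit.

```python
import re
from decimal import Decimal, ROUND_HALF_UP

# Austrian VAT ID (UID) format: "ATU" followed by exactly 8 digits.
AT_UID = re.compile(r"ATU[0-9]{8}")
CENT = Decimal("0.01")


def is_valid_at_uid(uid: str) -> bool:
    # Format check only: no check-digit validation and no VIES lookup.
    return AT_UID.fullmatch(uid.replace(" ", "")) is not None


def vat_breakdown(net: Decimal, rate_percent: int) -> tuple[Decimal, Decimal]:
    """Return (vat, gross), with VAT rounded half-up to whole cents (assumed rule)."""
    vat = (net * rate_percent / 100).quantize(CENT, rounding=ROUND_HALF_UP)
    return vat, net + vat


def test_vat_arithmetic():
    assert vat_breakdown(Decimal("100.00"), 20) == (Decimal("20.00"), Decimal("120.00"))
    assert vat_breakdown(Decimal("19.99"), 20) == (Decimal("4.00"), Decimal("23.99"))


def test_uid_regex():
    assert is_valid_at_uid("ATU12345678")
    assert not is_valid_at_uid("ATU1234567")   # one digit short
    assert not is_valid_at_uid("DE123456789")  # German prefix, not Austrian
```

Using `Decimal` instead of `float` keeps cent-level results exact, which is what makes these tests deterministic.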

Model Selection — Own Numbers

- Haiku, Sonnet, and 3 local models (gpt-oss-20b, Gemma-4-26B, Qwen2.5-14B) benchmarked on extraction F1

- Haiku chosen: F1 0.94 in 35 s for 24 pairs, the same F1 as Sonnet at half the latency

- Quote generation: Haiku €0.0008/doc vs Sonnet €0.0029/doc — 3.6× cheaper, indistinguishable output length
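The summary does not say how extraction F1 is scored. One plausible scheme, assumed here, is micro-averaged field-level F1. Under this scheme a predicted field is a true positive only if its value exactly matches the gold value, so a wrong value counts as both a false positive and a false negative. `extraction_f1` and the field names below are hypothetical.

```python
def extraction_f1(pairs: list[tuple[dict, dict]]) -> float:
    """Micro-averaged field-level F1 over (predicted, gold) dict pairs."""
    tp = fp = fn = 0
    for predicted, gold in pairs:
        for field, value in predicted.items():
            if gold.get(field) == value:
                tp += 1  # field extracted with the correct value
            else:
                fp += 1  # spurious field or wrong value
        # Gold fields the model missed or got wrong.
        fn += sum(1 for field, value in gold.items() if predicted.get(field) != value)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return 2 * precision * recall / (precision + recall) if precision + recall else 0.0
```

For example, a prediction with `client` and `hours` correct but `rate` missing scores precision 1.0, recall 2/3, and F1 0.8.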

Deterministic Compliance Engine

- A §11 UStG rules engine checks all 11 required invoice fields; the LLM never touches the legal-requirements path

- Field list verified against ebInterface 6.1 XSD, the Austrian Chamber of Commerce's machine-readable invoice standard

- Haiku correction loop capped at 2 retries; a non-compliant document is never returned
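A minimal sketch of the check-then-correct loop follows. It assumes the rules engine reports missing fields and that the correction step is an injectable callable. Here that callable is a stand-in for the Haiku call, not a real client. The field tuple is an illustrative subset with hypothetical names, not the full list of 11 §11 UStG fields.

```python
from typing import Callable

# Illustrative subset with hypothetical names; the real engine checks all 11 fields.
REQUIRED_FIELDS = (
    "supplier_name", "supplier_uid", "invoice_number",
    "issue_date", "net_amount", "vat_amount",
)
MAX_RETRIES = 2  # at most two correction attempts


class NonCompliantError(Exception):
    """Raised instead of returning a document that fails the rules check."""


def missing_fields(doc: dict) -> list:
    # Deterministic presence check: None or an empty string counts as missing.
    return [f for f in REQUIRED_FIELDS if doc.get(f) in (None, "")]


def finalize(doc: dict, correct: Callable[[dict, list], dict]) -> dict:
    """Return a compliant doc or raise; `correct` stands in for the LLM call."""
    for attempt in range(MAX_RETRIES + 1):
        missing = missing_fields(doc)
        if not missing:
            return doc
        if attempt < MAX_RETRIES:
            doc = correct(doc, missing)  # ask the model to fill only the gaps
    raise NonCompliantError(f"still missing after {MAX_RETRIES} retries: {missing}")
```

The legal check stays in `missing_fields` and is re-run after every correction, so the model can propose fixes but never decides compliance.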

Prompts as Versioned Code

- Prompts live in /prompts/{node}/v{n}.md, with the version pinned per node in models.yaml

- CHANGELOG tracks version → eval metric delta → rationale; extraction went through 3 versions

- Swapping model or prompt version is a one-line config change — no code change required
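Resolving a node's prompt from its pinned version can be sketched as follows. The dict stands in for the parsed contents of models.yaml, since parsing YAML needs a third-party library. Its keys (`model`, `prompt_version`) and the model labels are hypothetical, as is `load_prompt`.

```python
from pathlib import Path

# Stand-in for parsed models.yaml; keys and model labels are hypothetical.
MODELS_CONFIG = {
    "extract": {"model": "haiku", "prompt_version": 3},
    "draft_quote": {"model": "haiku", "prompt_version": 1},
}


def load_prompt(node: str, config: dict, root: Path = Path("prompts")) -> str:
    """Read prompts/{node}/v{n}.md for the version pinned in config."""
    version = config[node]["prompt_version"]
    return (root / node / f"v{version}.md").read_text(encoding="utf-8")
```

Changing `prompt_version` or `model` in the config changes what runs, while the calling code stays the same.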

Observability & Cost

- Per-node SQLite logging: latency, model, input/output tokens, cost (EUR) — canned ops queries included

- LangSmith tracing per request (EU endpoint, europe-west3)

- Full pipeline profiled: €0.007 avg/doc and 4.1s p50 end-to-end, well under the €0.10/doc target
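Per-node logging and the cost/latency roll-ups could look roughly like this. The `node_calls` schema, the helper names, and the sample rows in the test are hypothetical. End-to-end latency is approximated as the sum of a document's node latencies, which assumes nodes run sequentially. SQLite has no built-in median, so the p50 is computed in Python.

```python
import sqlite3
import statistics

# Hypothetical schema: one row per node invocation.
SCHEMA = """
CREATE TABLE IF NOT EXISTS node_calls (
    doc_id TEXT, node TEXT, model TEXT, latency_ms REAL,
    input_tokens INTEGER, output_tokens INTEGER, cost_eur REAL
)"""


def log_call(conn, doc_id, node, model, latency_ms, input_tokens, output_tokens, cost_eur):
    conn.execute(
        "INSERT INTO node_calls VALUES (?, ?, ?, ?, ?, ?, ?)",
        (doc_id, node, model, latency_ms, input_tokens, output_tokens, cost_eur),
    )


def avg_cost_per_doc(conn) -> float:
    # Sum node costs per document, then average across documents.
    (value,) = conn.execute(
        "SELECT AVG(doc_cost) FROM "
        "(SELECT SUM(cost_eur) AS doc_cost FROM node_calls GROUP BY doc_id)"
    ).fetchone()
    return value


def p50_latency_ms(conn) -> float:
    # Per-document latency = sum of node latencies (sequential-pipeline assumption).
    rows = conn.execute("SELECT SUM(latency_ms) FROM node_calls GROUP BY doc_id").fetchall()
    return statistics.median([r[0] for r in rows])
```

Aggregating per document before averaging matters, because averaging raw rows would report cost per node call rather than cost per document.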